American Capitalism's Great Crisis

The Curious Capitalist

How Wall Street is choking our economy and how to fix it

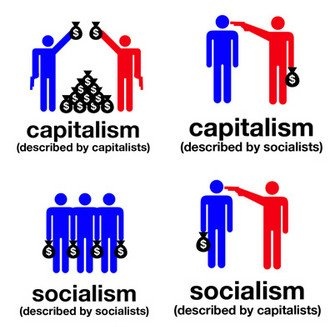

This represents more than just millennials not minding the label “socialist” or disaffected middle-aged Americans tiring of an anemic recovery. This is a majority of citizens being uncomfortable with the country’s economic foundation—a system that over hundreds of years turned a fledgling society of farmers and prospectors into the most prosperous nation in human history. To be sure, polls measure feelings, not hard market data. But public sentiment reflects day-to-day economic reality. And the data (more on that later) shows Americans have plenty of concrete reasons to question their system.

This crisis of faith has had no more severe expression than the 2016 presidential campaign, which has turned on the questions of who, exactly, the system is working for and against, as well as why, eight years and several trillion dollars of stimulus on from the financial crisis, the economy is still growing so slowly. All the candidates have prescriptions: Sanders talks of breaking up big banks; Trump says hedge funders should pay higher taxes; Clinton wants to strengthen existing financial regulation. In Congress, Republican House Speaker Paul Ryan remains committed to less regulation.

All of them are missing the point. America’s economic problems go far beyond rich bankers, too-big-to-fail financial institutions, hedge-fund billionaires, offshore tax avoidance or any particular outrage of the moment. In fact, each of these is symptomatic of a more nefarious condition that threatens, in equal measure, the very well-off and the very poor, the red and the blue. The U.S. system of market capitalism itself is broken. That problem, and what to do about it, is at the center of my book Makers and Takers: The Rise of Finance and the Fall of American Business, a three-year research and reporting effort from which this piece is adapted.

To understand how we got here, you have to understand the relationship between capital markets—meaning the financial system—and businesses. From the creation of a unified national bond and banking system in the U.S. in the late 1790s to the early 1970s, finance took individual and corporate savings and funneled them into productive enterprises, creating new jobs, new wealth and, ultimately, economic growth. Of course, there were plenty of blips along the way (most memorably the speculation leading up to the Great Depression, which was later curbed by regulation). But for the most part, finance—which today includes everything from banks and hedge funds to mutual funds, insurance firms, trading houses and such—essentially served business. It was a vital organ but not, for the most part, the central one.

Over the past few decades, finance has turned away from this traditional role. Academic research shows that only a fraction of all the money washing around the financial markets these days actually makes it to Main Street businesses. “The intermediation of household savings for productive investment in the business sector—the textbook description of the financial sector—constitutes only a minor share of the business of banking today,” according to academics Oscar Jorda, Alan Taylor and Moritz Schularick, who’ve studied the issue in detail. By their estimates and others, around 15% of capital coming from financial institutions today is used to fund business investments, whereas it would have been the majority of what banks did earlier in the 20th century.

“The trend varies slightly country by country, but the broad direction is clear,” says Adair Turner, a former British banking regulator and now chairman of the Institute for New Economic Thinking, a think tank backed by George Soros, among others. “Across all advanced economies, and the United States and the U.K. in particular, the role of the capital markets and the banking sector in funding new investment is decreasing.” Most of the money in the system is being used for lending against existing assets such as housing, stocks and bonds.

To get a sense of the size of this shift, consider that the financial sector now represents around 7% of the U.S. economy, up from about 4% in 1980. Despite currently taking around 25% of all corporate profits, it creates a mere 4% of all jobs. Trouble is, research by numerous academics as well as institutions like the Bank for International Settlements and the International Monetary Fund shows that when finance gets that big, it starts to suck the economic air out of the room. In fact, finance starts having this adverse effect when it’s only half the size that it currently is in the U.S. Thanks to these changes, our economy is gradually becoming “a zero-sum game between financial wealth holders and the rest of America,” says former Goldman Sachs banker Wallace Turbeville, who runs a multiyear project on the rise of finance at the New York City-based nonprofit Demos.

It’s not just an American problem, either. Most of the world’s leading market economies are grappling with aspects of the same disease. Globally, free-market capitalism is coming under fire, as countries across Europe question its merits and emerging markets like Brazil, China and Singapore run their own forms of state-directed capitalism. An ideologically broad range of financiers and elite business managers—Warren Buffett, BlackRock’s Larry Fink, Vanguard’s John Bogle, McKinsey’s Dominic Barton, Allianz’s Mohamed El-Erian and others—have started to speak out publicly about the need for a new and more inclusive type of capitalism, one that also helps businesses make better long-term decisions rather than focusing only on the next quarter. The Pope has become a vocal critic of modern market capitalism, lambasting the “idolatry of money and the dictatorship of an impersonal economy” in which “man is reduced to one of his needs alone: consumption.”

During my 23 years in business and economic journalism, I’ve long wondered why our market system doesn’t serve companies, workers and consumers better than it does. For some time now, finance has been thought by most to be at the very top of the economic hierarchy, the most aspirational part of an advanced service economy that graduated from agriculture and manufacturing. But research shows just how the unintended consequences of this misguided belief have endangered the very system America has prided itself on exporting around the world.

America’s economic illness has a name: financialization. It’s an academic term for the trend by which Wall Street and its methods have come to reign supreme in America, permeating not just the financial industry but also much of American business. It includes everything from the growth in size and scope of finance and financial activity in the economy; to the rise of debt-fueled speculation over productive lending; to the ascendancy of shareholder value as the sole model for corporate governance; to the proliferation of risky, selfish thinking in both the private and public sectors; to the increasing political power of financiers and the CEOs they enrich; to the way in which a “markets know best” ideology remains the status quo. Financialization is a big, unfriendly word with broad, disconcerting implications.

University of Michigan professor Gerald Davis, one of the pre-eminent scholars of the trend, likens financialization to a “Copernican revolution” in which business has reoriented its orbit around the financial sector. This revolution is often blamed on bankers. But it was facilitated by shifts in public policy, from both sides of the aisle, and crafted by the government leaders, policymakers and regulators entrusted with keeping markets operating smoothly. Greta Krippner, another University of Michigan scholar, who has written one of the most comprehensive books on financialization, believes this was the case when financialization began its fastest growth, in the decades from the late 1970s onward. According to Krippner, that shift encompasses Reagan-era deregulation, the unleashing of Wall Street and the rise of the so-called ownership society that promoted owning property and further tied individual health care and retirement to the stock market.

The changes were driven by the fact that in the 1970s, the growth that America had enjoyed following World War II began to slow. Rather than make tough decisions about how to bolster it (which would inevitably mean choosing among various interest groups), politicians decided to pass that responsibility to the financial markets. Little by little, the Depression-era regulation that had served America so well was rolled back, and finance grew to become the dominant force that it is today. The shifts were bipartisan, and to be fair they often seemed like good ideas at the time; but they also came with unintended consequences. The Carter-era deregulation of interest rates (something that was, in an echo of today’s overlapping left- and right-wing populism, supported by an assortment of odd political bedfellows from Ralph Nader to Walter Wriston, then head of Citibank) opened the door to a spate of financial “innovations” and a shift in bank function from lending to trading. Reaganomics famously led to a number of other economic policies that favored Wall Street. Clinton-era deregulation, which seemed a path out of the economic doldrums of the late 1980s, continued the trend. Loose monetary policy from the Alan Greenspan era onward created an environment in which easy money papered over underlying problems in the economy, so much so that the economy is now chronically dependent on near-zero interest rates to keep from falling back into recession.

This sickness, not so much the product of venal interests as of a complex and long-term web of changes in government and private industry, now manifests itself in myriad ways: a housing market that is bifurcated and dependent on government life support, a retirement system that has left millions insecure in their old age, a tax code that favors debt over equity. Debt is the lifeblood of finance; with the rise of the securities-and-trading portion of the industry came a rise in debt of all kinds, public and private. That’s bad news, since a wide range of academic research shows that rising debt and credit levels stoke financial instability. And yet, as finance has captured a greater and greater piece of the national pie, it has, perversely, all but ensured that debt is indispensable to maintaining any growth at all in an advanced economy like the U.S., where 70% of output is consumer spending. Debt-fueled finance has become a saccharine substitute for the real thing, an addiction that just gets worse. (The amount of credit offered to American consumers has doubled in real dollars since the 1980s, as have the fees they pay to their banks.)

As the economist Raghuram Rajan, one of the most prescient seers of the 2008 financial crisis, argues, credit has become a palliative to address the deeper anxieties of downward mobility in the middle class. In his words, “let them eat credit” could well summarize the mantra of the go-go years before the economic meltdown. And things have only deteriorated since, with global debt levels $57 trillion higher than they were in 2007.

The rise of finance has also distorted local economies. It’s the reason rents are rising in some communities where unemployment is still high. America’s housing market now favors cash buyers, since banks are still more interested in making profits by trading than in their traditional role of lending out our savings to people and businesses looking to make long-term investments (like buying a house). That keeps younger people off the housing ladder. One perverse result: Blackstone, a private-equity firm, is currently the largest single-family-home landlord in America, since it had the money to buy properties up cheap in bulk following the financial crisis. The rise of finance is also at the heart of retirement insecurity, since fees from actively managed mutual funds “are likely to confiscate as much as 65% or more of the wealth that … investors could otherwise easily earn,” as Vanguard founder Bogle testified to Congress in 2014.

It’s even the reason companies in industries from autos to airlines are trying to move into the business of finance themselves. American companies across every sector today earn five times the revenue from financial activities—investing, hedging, tax optimizing and offering financial services, for example—that they did before 1980. Traditional hedging by energy and transport firms, for example, has been overtaken by profit-boosting speculation in oil futures, a shift that actually undermines their core business by creating more price volatility. Big tech companies have begun underwriting corporate bonds the way Goldman Sachs does. And top M.B.A. programs would likely encourage them to do just that; finance has become the center of all business education.

Washington, too, is so deeply tied to the ambassadors of the capital markets—six of the 10 biggest individual political donors this year are hedge-fund barons—that even well-meaning politicians and regulators don’t see how deep the problems are. When I asked one former high-level Obama Administration Treasury official back in 2013 why more stakeholders aside from bankers hadn’t been consulted about crafting the particulars of Dodd-Frank financial reform (93% of consultation on the Volcker Rule, for example, was taken with the financial industry itself), he said, “Who else should we have talked to?” The answer—to anybody not profoundly influenced by the way finance thinks—might have been the people banks are supposed to lend to, or the scholars who study the capital markets, or the civic leaders in communities decimated by the financial crisis.

Of course, there are other elements to the story of America’s slow-growth economy, including familiar trends from globalization to technology-related job destruction. These are clearly massive challenges in their own right. But the single biggest unexplored reason for long-term slower growth is that the financial system has stopped serving the real economy and now serves mainly itself. A lack of real fiscal action on the part of politicians forced the Fed to pump $4.5 trillion in monetary stimulus into the economy after 2008. This shows just how broken the model is, since the central bank’s best efforts have resulted in record stock prices (which enrich mainly the wealthiest 10% of the population, who own more than 80% of all stocks) but also an economy growing at a lackluster 2% with almost no income growth.

Now, as many top economists and investors predict an era of much lower asset-price returns over the next 30 years, America’s ability to offer up even the appearance of growth (via financially oriented strategies like low interest rates, more and more consumer credit, tax-deferred debt financing for businesses, and asset bubbles that, until they burst, make people feel richer than they really are) is at an end.

This pinch is particularly evident in the tumult many American businesses face. Lending to small business has fallen particularly sharply, as has the number of startup firms. In the early 1980s, new companies made up half of all U.S. businesses. For all the talk of Silicon Valley startups, the number of new firms as a share of all businesses has actually shrunk. From 1978 to 2012 it declined by 44%, a trend that numerous researchers and even many investors and businesspeople link to the financial industry’s change in focus from lending to speculation. The wane in entrepreneurship means less economic vibrancy, given that new businesses are the nation’s foremost source of job creation and GDP growth. Buffett summed it up in his folksy way: “You’ve now got a body of people who’ve decided they’d rather go to the casino than the restaurant” of capitalism.

In lobbying for short-term share-boosting management, finance is also largely responsible for the drastic cutback in research-and-development outlays in corporate America, investments that are seed corn for future prosperity. Take share buybacks, in which a company—usually with some fanfare—goes to the stock market to purchase its own shares, usually at the top of the market, and often as a way of artificially bolstering share prices in order to enrich investors and executives paid largely in stock options. Indeed, if you were to chart the rise in money spent on share buybacks and the fall in corporate spending on productive investments like R&D, the two lines make a perfect X. The former has been going up since the 1980s, with S&P 500 firms now spending $1 trillion a year on buybacks and dividends—equal to about 95% of their net earnings—rather than investing that money back into research, product development or anything that could contribute to long-term company growth. No sector has been immune, not even the ones we think of as the most innovative. Many tech firms, for example, spend far more on share-price boosting than on R&D as a whole. The markets penalize them when they don’t. One case in point: back in March 2006, Microsoft announced major new technology investments, and its stock fell for two months. But in July of that same year, it embarked on $20 billion worth of stock buying, and the share price promptly rose by 7%. This kind of twisted incentive for CEOs and corporate officers has only grown since.

As a result, business dynamism, which is at the root of economic growth, has suffered. The number of new initial public offerings (IPOs) is about a third of what it was 20 years ago. True, the dollar value of IPOs in 2014 was $74.4 billion, up from $47.1 billion in 1996. (The median IPO rose to $96 million from $30 million during the same period.) This may show investors want to make only the surest of bets, which is not necessarily the sign of a vibrant market. But there’s another, more disturbing reason: firms simply don’t want to go public, lest their work become dominated by playing by Wall Street’s rules rather than creating real value.

An IPO—a mechanism that once meant raising capital to fund new investment—is likely today to mark not the beginning of a new company’s greatness, but the end of it. According to a Stanford University study, innovation tails off by 40% at tech companies after they go public, often because of Wall Street pressure to keep jacking up the stock price, even if it means curbing the entrepreneurial verve that made the company hot in the first place.

A flat stock price can spell doom. It can get CEOs canned and turn companies into acquisition fodder, which often saps once innovative firms. Little wonder, then, that business optimism, as well as business creation, is lower than it was 30 years ago, or that wages are flat and inequality growing. Executives who receive as much as 82% of their compensation in stock naturally make shorter-term business decisions that might undermine growth in their companies even as they raise the value of their own options.

It’s no accident that corporate stock buybacks, corporate pay and the wealth gap have risen concurrently over the past four decades. There are any number of studies that illustrate this type of intersection between financialization and inequality. One of the most striking was by economists James Galbraith and Travis Hale, who showed how during the late 1990s, changing income inequality tracked the go-go Nasdaq stock index to a remarkable degree.

Recently, this pattern has become evident at a number of well-known U.S. companies. Take Apple, one of the most successful companies of the past 50 years. Apple has around $200 billion sitting in the bank, yet it has borrowed billions of dollars cheaply over the past several years, thanks to superlow interest rates (themselves a response to the financial crisis), to pay back investors in order to bolster its share price. Why borrow? In part because it’s cheaper than repatriating cash and paying U.S. taxes. All the financial engineering helped boost the California firm’s share price for a while. But it didn’t stop activist investor Carl Icahn, who had manically advocated for borrowing and buybacks, from dumping the stock the minute revenue growth took a turn for the worse in late April.

It is perhaps the ultimate irony that large, rich companies like Apple are most involved with financial markets at times when they don’t need any financing. Top-tier U.S. businesses have never enjoyed greater financial resources. They have a record $2 trillion in cash on their balance sheets—enough money combined to make them the 10th largest economy in the world. Yet in the bizarre order that finance has created, they are also taking on record amounts of debt to buy back their own stock, creating what may be the next debt bubble to burst.

You and I, whether we recognize it or not, are also part of a dysfunctional ecosystem that fuels short-term thinking in business. The people who manage our retirement money—fund managers working for asset-management firms—are typically compensated for delivering returns over a year or less. That means they use their financial clout (which is really our financial clout in aggregate) to push companies to produce quick-hit results rather than execute long-term strategies. Sometimes pension funds even invest with the activists who are buying up the companies we might work for—and those same activists look for quick cost cuts and potentially demand layoffs.

It’s a depressing state of affairs, no doubt. Yet America faces an opportunity right now: a rare second chance to do the work of refocusing and right-sizing the financial sector that should have been done in the years immediately following the 2008 crisis. And there are bright spots on the horizon.

Despite the lobbying power of the financial industry and the vested interests both in Washington and on Wall Street, there’s a growing push to put the financial system back in its rightful place, as a servant of business rather than its master. Surveys show that the majority of Americans would like to see the tax system reformed and the government take more direct action on job creation and poverty reduction, and address inequality in a meaningful way. Each candidate is crafting a message around this, which will keep the issue front and center through November.

The American public understands just how deeply and profoundly the economic order isn’t working for the majority of people. The key to reforming the U.S. system is comprehending why it isn’t working.

Remooring finance in the real economy isn’t as simple as splitting up the biggest banks (although that would be a good start). It’s about dismantling the hold of financial-oriented thinking in every corner of corporate America. It’s about reforming business education, which is still permeated with academics who resist challenges to the gospel of efficient markets in the same way that medieval clergy dismissed scientific evidence that might challenge the existence of God. It’s about changing a tax system that treats one-year investment gains the same as longer-term ones, and induces financial institutions to push overconsumption and speculation rather than healthy lending to small businesses and job creators. It’s about rethinking retirement, crafting smarter housing policy and restraining a money culture filled with lobbyists who violate America’s essential economic principles.

It’s also about starting a bigger conversation about all this, with a broader group of stakeholders. The structure of American capital markets and whether or not they are serving business is a topic that has traditionally been the sole domain of “experts”—the financiers and policymakers who often have a self-interested perspective to push, and who do so in complicated language that keeps outsiders out of the debate. When it comes to finance, as with so many issues in a democratic society, complexity breeds exclusion.

Finding solutions won’t be easy. There are no silver bullets, and nobody really knows the perfect model for a high-functioning, advanced market system in the 21st century. But capitalism’s legacy is too long, and the well-being of too many people is at stake, to do nothing in the face of our broken status quo. Neatly packaged technocratic tweaks cannot fix it. What is required now is lifesaving intervention.

Crises of faith like the one American capitalism is currently suffering can be a good thing if they lead to re-examination and reaffirmation of first principles. The right question here is in fact the simplest one: Are financial institutions doing things that provide a clear, measurable benefit to the real economy? Sadly, the answer at the moment is mostly no. But we can change things. Our system of market capitalism wasn’t handed down, in perfect form, on stone tablets. We wrote the rules. We broke them. And we can fix them.